Microsoft’s VASA-1 AI video generation system can make lifelike avatars that speak volumes from a single photo

Microsoft’s VASA-1 generates a video from a photo and an audio clip

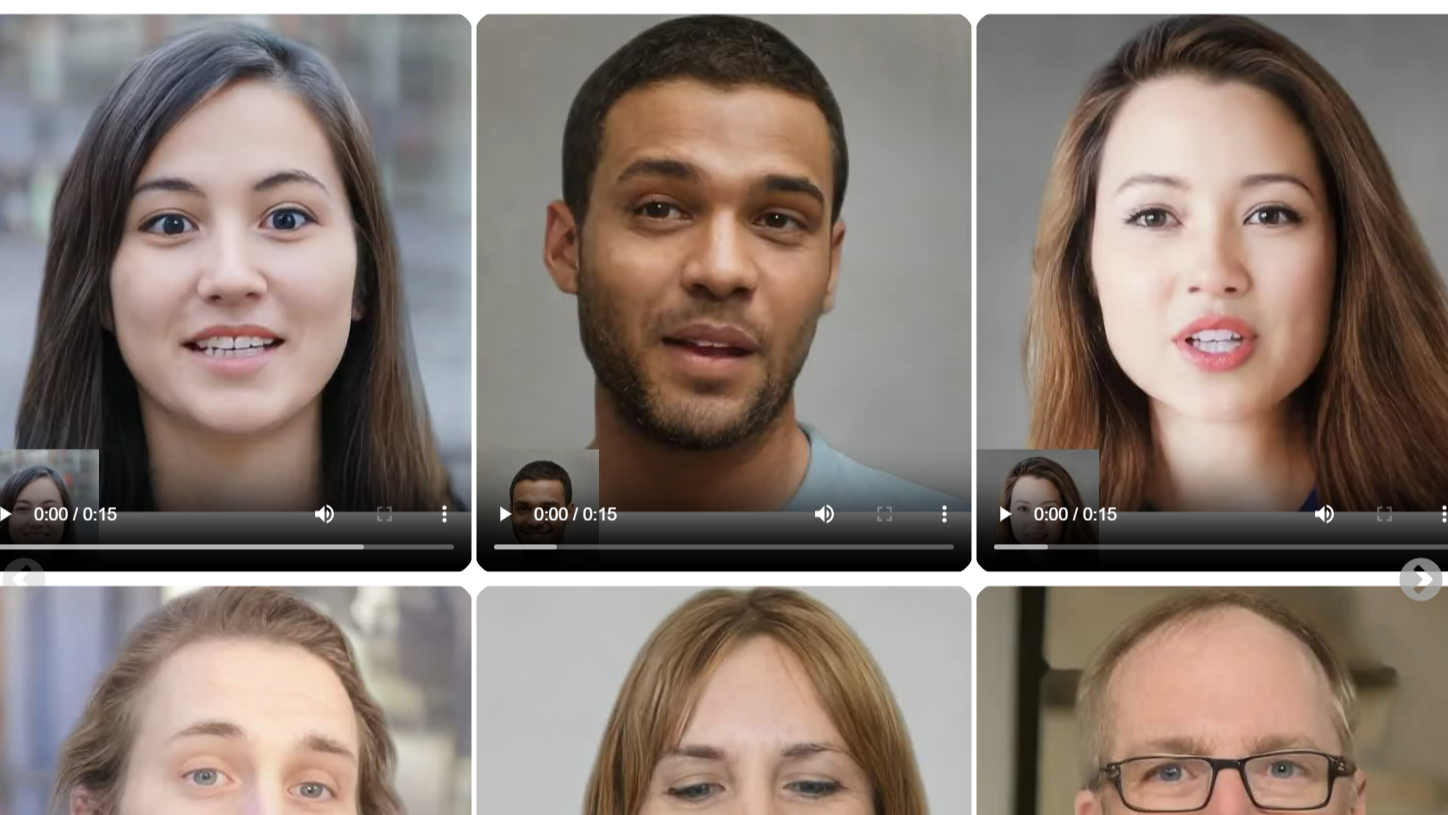

AI-generated video is already a reality, and now another player has joined the fray: Microsoft. Apparently, the tech giant has developed a generative AI system that can whip up realistic talking avatars from a single picture and an audio clip. The tool is named VASA-1, and it goes beyond mimicking mouth movement; it can capture lifelike emotions and produce natural-looking movements as well.

The system lets users adjust the subject’s eye movements, the perceived distance of the subject from the camera, and the emotions expressed. VASA-1 is the first model in what is rumored to be a series of AI tools, and MSPowerUser reports that it can conjure up specific facial expressions, closely synchronize lip movements with the audio, and produce human-like head motions.

It offers a wide range of emotions to choose from and can reproduce facial subtleties, which sounds like a recipe for scarily convincing results.

How VASA-1 works and what it's capable of

Seemingly taking a note from how human 3D animators and modelers work, VASA-1 makes use of a process Microsoft calls ‘disentanglement.’ This allows the system to control and edit facial expressions, 3D head position, and facial features independently of each other, and it’s what powers VASA-1’s realism.
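To make the idea of independent control a little more concrete, here is a toy sketch in Python. It is not VASA-1’s architecture, and the `FaceState` class and its field names are hypothetical, invented purely for illustration. It only shows what it means for one factor (say, expression) to change while the others (facial features, head position) stay untouched.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class FaceState:
    """Hypothetical container with one field per independently editable factor."""
    identity: str                          # facial features (who the subject is)
    head_pose: Tuple[float, float, float]  # (yaw, pitch, roll) in degrees
    expression: str                        # e.g. "neutral" or "smile"


# Starting point: a neutral, forward-facing subject.
base = FaceState(identity="subject_a", head_pose=(0.0, 0.0, 0.0), expression="neutral")

# Change only the expression; identity and head pose carry over unchanged.
smiling = replace(base, expression="smile")

# Change only the head pose; identity and expression carry over unchanged.
turned = replace(base, head_pose=(15.0, 0.0, 0.0))

print(smiling)
print(turned)
```

In a real system these factors would be learned numerical representations rather than labels, but the principle is the same: editing one should not disturb the others.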

As you might be imagining already, this has seismic potential, and it could totally change our experiences of digital apps and interfaces. According to MSPowerUser, VASA-1 can also handle inputs unlike those it was trained on. Apparently, the system wasn’t trained on artistic photos, singing voices, or non-English speech, but if you request a video that features one of these, it’ll oblige.

The Microsoft researchers behind VASA-1 praise its real-time efficiency, stating that the system can make fairly high-resolution videos (512×512 pixels) at high frame rates. Frame rate, measured in frames per second (fps), is the frequency at which a series of images (referred to as frames) is captured or displayed in succession within a piece of media. The researchers claim that VASA-1 can generate videos at 45fps in offline mode, and at 40fps with online generation.
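For a sense of what those numbers mean in practice, the short Python sketch below works out how much time a system has to produce each frame at the quoted frame rates, and how many pixels per second a 512×512 video involves. The function names are my own, and this is simple arithmetic on the figures above rather than a measurement of VASA-1 itself.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce a single frame, in milliseconds."""
    return 1000.0 / fps


def pixels_per_second(width: int, height: int, fps: float) -> float:
    """Number of pixels that must be produced each second at a given frame rate."""
    return width * height * fps


# Frame rates and resolution as quoted by the researchers.
for mode, fps in (("offline", 45), ("online", 40)):
    budget = frame_budget_ms(fps)
    throughput = pixels_per_second(512, 512, fps)
    print(f"{mode}: {budget:.1f} ms per frame, {throughput:,.0f} pixels per second")
```

At 40fps, each frame has to be ready in 25 milliseconds, which is why the researchers describe the system as running in real time.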

You can check out the state of VASA-1 and learn more about it on Microsoft’s dedicated webpage for the project. It has several demonstrations and includes links to download information about it, ending with a section headlined ‘Risks and responsible AI considerations.’

Works like magic - but is it a miracle spell or a recipe for disaster?

In that final, reflective section of its project page, Microsoft acknowledges that a tool like this has plentiful scope for misuse, but the researchers try to emphasize the potential positives of VASA-1. They’re not wrong; a technology like this could mean next-level educational experiences that are available to more students than ever before, better assistance for people who have difficulties communicating, the capability to provide companionship, and improved digital therapeutic support.

All of that said, it would be foolish to ignore the potential for harm and wrongdoing with something like this. Microsoft does state that it doesn’t currently have plans to make VASA-1 available in any form to the public until it’s reassured that “the technology will be used responsibly and in accordance with proper regulations.” If Microsoft sticks to this ethos, I think it could be a long wait.

All in all, I think it’s becoming hard to deny that generative AI video tools are going to become more commonplace and the countdown to when they saturate our lives has begun. Google has been working on an analogous AI system with the moniker VLOGGER, and also recently put out a paper detailing how VLOGGER can create realistic videos of people moving, speaking, and gesturing with the input of a single photo.

OpenAI also made headlines recently by introducing its own AI video generation tool, Sora, which can generate videos from text descriptions. OpenAI explained how Sora works on a dedicated page, and provided demonstrations that impressed a lot of people - and worried even more.

I am wary of what these innovations will enable us to do, and I’m glad that, as far as we know, all three of these new tools are being kept tightly under wraps. I think realistically the best guardrails we have against the misuse of technologies like these are airtight regulations, but I’m doubtful that all governments will take these steps in time.

Kristina is a UK-based Computing Writer, and is interested in all things computing, software, tech, mathematics and science. Previously, she has written articles about popular culture, economics, and miscellaneous other topics.

She has a personal interest in the history of mathematics, science, and technology; in particular, she closely follows AI and philosophically-motivated discussions.