The Pixel 6's AI camera tricks explained – and why they're a threat to Photoshop

The AI photo editing arms race is hotting up

Google's Pixel phones have been the spark behind today's incredible computational photography revolution – and the new Pixel 6 and Pixel 6 Pro look to once again break new imaging ground with some impressive new camera tricks.

The two phones have powerful camera hardware, including 50MP wide cameras and 12MP ultrawides (plus a 4x optical zoom telephoto camera on the Pixel 6 Pro). But it's actually their new software features, driven by Google's new Tensor chip, that are the most interesting part of the Pixel range's photographic story.

Google has previously established its camera supremacy in areas like Night Sight, but this time the approach is a little different. The Pixel 6 and Pixel 6 Pro have modes designed to overcome limitations in the hardware, your skill as a photographer and even in the scene you’re trying to capture.

These new modes are Magic Eraser, Face Unblur and Motion Mode. They offer effects you can recreate in Adobe Photoshop and comparable image editing suites, but require none of the know-how. Or a pricey subscription to Adobe’s Creative Cloud. Or even a laptop.

So do these new Google Pixel camera tricks undercut the AI photo-editing power Adobe is hastily building into Photoshop? Here we’re going to look at how each of these tools works, how promising they are, and the ways in which they may even surpass Photoshop’s tools.

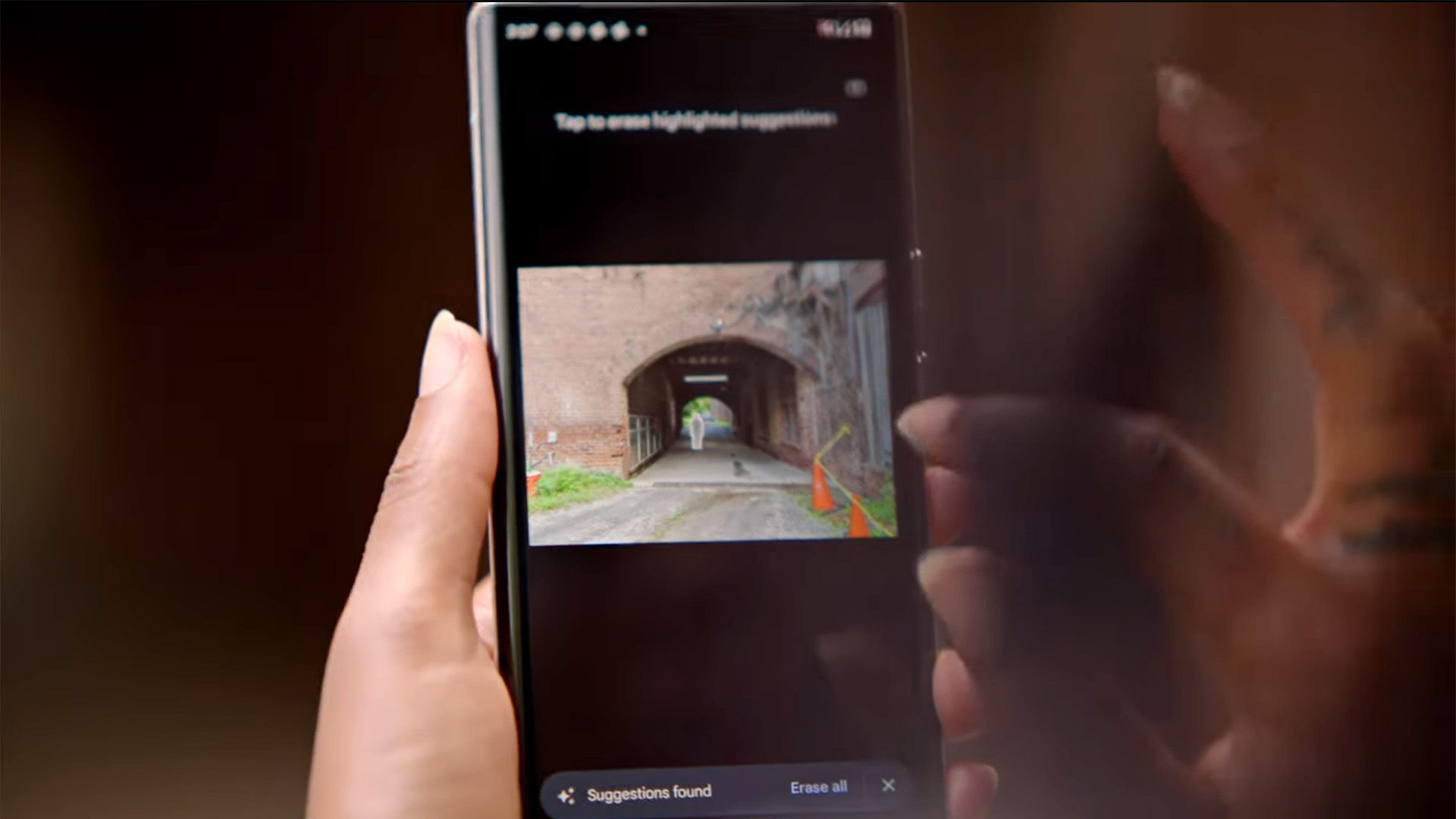

What is the Magic Eraser?

Magic Eraser is probably the most interesting of the Pixel 6’s imaging tools for keen photographers. This tool lets you remove objects or people from a picture you've already taken.

This trick is nothing new. It’s an alternative to Photoshop's Heal brush, and there is a similar tool in Google’s Snapseed – in fact, it's been there since 2017.

The Pixel 6's version is a little different, though. In Snapseed you have to manually select the area you want to remove. The app then analyzes the surrounding image data to fill in the gap, generally blocking it in with the textures it finds nearby.
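To make the "fill in the gap from the surroundings" idea concrete, here is a minimal Python sketch. It is not Snapseed's or Photoshop's actual algorithm, which neither company documents at this level; the `heal` function and its parameters are our own hypothetical names, and the image is just a small grid of grayscale numbers.

```python
def heal(image, mask, passes=50):
    """Fill masked pixels with the average of their already-known neighbours.

    image: 2D list of grayscale values
    mask:  2D list of booleans, True marks a pixel to remove
    (Toy illustration only; not any real app's healing algorithm.)
    """
    h, w = len(image), len(image[0])
    out = [row[:] for row in image]
    unknown = {(y, x) for y in range(h) for x in range(w) if mask[y][x]}

    for _ in range(passes):
        if not unknown:
            break
        filled = {}
        for y, x in unknown:
            # Collect the up/down/left/right neighbours whose values are known.
            known = [
                out[ny][nx]
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                if 0 <= ny < h and 0 <= nx < w and (ny, nx) not in unknown
            ]
            if known:
                filled[(y, x)] = sum(known) / len(known)
        if not filled:
            break  # no known pixel touches the hole (e.g. everything is masked)
        for (y, x), value in filled.items():
            out[y][x] = value
        unknown.difference_update(filled)
    return out


wall = [[10, 10, 10], [10, 99, 10], [10, 10, 10]]
blemish = [[False, False, False], [False, True, False], [False, False, False]]
print(heal(wall, blemish)[1][1])  # 10.0
```

A flat wall fills in perfectly with this approach. Anything with real texture gets smeared into an average of its edges, which hints at why fills based purely on nearby pixels can look fake.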

Magic Eraser suggests objects you can remove from a scene. You then select them, or just hit a button to remove them all. On paper, it’s a much more user-friendly take on the concept.

Will Magic Eraser be any good?

Let’s be blunt – Snapseed’s Heal tool is rubbish, at least compared to the excellent Photoshop alternative. It frequently results in obviously repeated texture patterns, which look fake, and often leaves unnatural-looking splodges of image information that don’t make much sense in context.

Magic Eraser is much more interesting. Google has not fully revealed how it works under the hood, but we have a pretty good idea. The Pixel 6 Magic Eraser is likely informed by the same machine learning techniques used in its other products and services. For example, Google Photos can accurately identify cars, lamp-posts, people, boats and almost countless other scenes.

This intelligence has grown over the years thanks to the photos you upload to Google Photos – and also those maddening verification screens that make you click the squares that contain a fire hydrant or crosswalk.

In the Magic Eraser context, this lets a Pixel 6 not just identify objects, but also bring a degree of knowledge about what texture might be under the objects you choose to remove. Regardless of what image you try to process, Google has probably seen millions that look something like it.

This makes Magic Eraser a sort of next-generation healing brush that relies less on image data in the picture itself, and more on what similar images look like. It’s a relative of fully AI-generated and 'deep fake' tech, and this should let it evaluate where, for example, shadows should lie under removed objects – even if the algorithm 'thinks' in a more abstract way.

However, Google says it won’t work on all image elements. And the larger an object you try to remove, the more fake it is going to look. It is also not going to be useful in the contexts where we use Photoshop’s heal brush the most. More often than not, we use it to remove dust from images, or splotches caused by dust on the lens or sensor.

Photoshop's heal brush is also perfect for removing power lines, and tiny pieces of background scenery that make up a small part of the image, but can still alter the perception of it significantly when zapped. We’re not sure Magic Eraser will be able to deal with these.

Part of the art of the heal tool is in using multiple rounds of corrections, because by altering the image on the first pass you actually change the library of image data it uses on the second pass. While you can manually select areas to process with Magic Eraser, this is where the process may start to feel fiddly, and you may wish you had a mouse to hand.

Magic Eraser is certain to be a cool tool, but likely won’t fare that well with large objects near to the foreground/subject. We're certainly looking forward to trying it out, though.

What is Face Unblur?

Face Unblur is sure to prove a useful feature for casual photography. It tries to solve one of the headaches of capturing a moving subject, particularly indoors.

Unless you use a fast shutter speed, moving objects are going to appear blurry. We could blame the small sensors used in phones, but you’ll actually see the exact same effect with a large-sensor mirrorless camera if you shoot in “Auto”.

Face Unblur counteracts this through computational photography. And it’s one of the more interesting uses for it we’ve seen since Google’s Super Res Zoom from 2018.

When you shoot a Face Unblur image, both the Pixel 6's primary and ultra-wide cameras capture a shot. The primary camera uses the shutter speed it would normally opt for, based on the lighting conditions. The ultra-wide takes a picture at a faster shutter speed.

We don’t know how the ultra-wide approaches ISO sensitivity, but it can likely use a lower setting than it might use for a standard image, because this shot is there to look for areas of image contrast. It doesn’t necessarily need to produce a bright and satisfying-looking pic on its own.

The two exposures are merged, with the image information from the ultra-wide shot used to firm up detail and definition only in the face. And it knows where the face is because Google algorithms can do that without even trying these days.
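Google hasn't published how this merge actually works, so treat the following Python sketch as a guess at the general shape rather than the real thing. It assumes the two frames are already aligned (in reality the two cameras have different fields of view), that a face mask is supplied, and that a simple brightness match is enough. The `merge_face` function is a hypothetical name of our own.

```python
def merge_face(main, sharp, face_mask):
    """Blend two aligned grayscale frames (2D lists of numbers).

    main:      normal-shutter frame (well exposed, possibly blurred)
    sharp:     fast-shutter frame (darker, but crisp)
    face_mask: 2D list of booleans, True where a face was detected
    (Toy illustration only; not Google's Face Unblur pipeline.)
    """
    face = [(y, x) for y, row in enumerate(face_mask)
            for x, inside in enumerate(row) if inside]
    if not face:
        return [row[:] for row in main]

    # Match brightness: scale the darker frame so the face region has the
    # same average level as it does in the main frame.
    main_mean = sum(main[y][x] for y, x in face) / len(face)
    sharp_mean = sum(sharp[y][x] for y, x in face) / len(face)
    gain = main_mean / sharp_mean if sharp_mean else 1.0

    out = [row[:] for row in main]
    for y, x in face:
        out[y][x] = sharp[y][x] * gain  # crisp detail, main-frame brightness
    return out


main = [[100, 100, 100, 100], [100, 60, 60, 100]]   # face edge smeared to 60, 60
sharp = [[50, 50, 50, 50], [50, 10, 50, 50]]        # darker, but the edge is intact
mask = [[False] * 4, [False, True, True, False]]
print(merge_face(main, sharp, mask)[1])  # [100, 20.0, 100.0, 100]
```

Everything outside the mask comes straight from the main camera, which is why the rest of the picture keeps its normal exposure while only the face gains definition.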

Will Face Unblur be any good?

You can’t really recreate the exact effect of Face Unblur in Photoshop if you use a single image. Your main options are part of the Sharpen tools, but there is one made specifically for motion blur.

In the Smart Sharpen effect menu you’ll see a drop-down that includes 'motion blur'. To get the best effect you have to work out the angle of the motion blur and its distance in pixels. You can then dial in sharpening that can have a pretty good effect on the final image.

However, it's not quite the same as Face Unblur. Smart Sharpen affects the entire image, and will therefore have a deleterious effect on the rest of the picture, unless you make a new layer in which you cut out the face.

Face Unblur acts a bit more like a composite of shots taken with a camera’s burst mode if, say, you took the burst shot you liked the look of most as a whole, and then cut out and used the face from the shot that looked the sharpest.

Recreating the effect of Face Unblur is going to be difficult. And most photographers would likely discount the images off-hand before trying it anyway. As far as we can tell, this isn’t a specific mode you have to switch to either. If it’s used, or suggested, whenever required, that’s fantastic.

However, it looks like it will only work for human subjects for now. And as anyone who has ever tried to photograph a dog before knows: we definitely need it to work for dogs, too.

There’s also a slight question mark over its efficacy. Even in Google’s own demo the final image looks sharper, but not pin-sharp. Still, it’s likely to be a major benefit for casual photography. But would you want to blow up a Face Unblur shot to A3 size and put it on your wall? Probably not.

What is Motion Mode?

If you take the concept of Portrait mode, where the background of an image is blurred to emulate the effect of a larger wide-aperture lens, and apply it to the photographic technique of 'panning', you end up with the Pixel 6’s Motion Mode. It uses blurring to add drama to your moving images.

However, what we’re actually emulating here in classic photography terms depends on what you shoot. In Google’s own demo of a cyclist at a velodrome, you’d get a similar effect by using a fast but not ultra-fast shutter speed, and moving the camera in-line with the cyclist’s motion as you shoot. That's 'panning' and it's a common technique used by sports photographers.

This means the rider ends up mostly in focus, but the background is blurred. And it is not an easy technique to get right. We last tried it at an F1 race in the pre-pandemic days, and mostly ended up with a lot of somewhat blurry images. We are therefore oh-so-down for this one.

That is not the only use of Motion Mode, though. You can also use it to take photos where the wider subject is static, but there’s motion within it. Google used a waterfall as an example, but it should also work for cars moving through streets at night.

We separate this style from the cyclist, because the technique you’d use in a dedicated camera is different. This second style would use a long exposure, not motion of the camera itself.

The way these photos are made is what joins the two together. As in other forms of computational photography, they are created using several merged exposures, where both the blurred parts and those that are kept sharp are deliberately controlled.

Will Motion Mode be any good?

This mode seems like a great addition to the Pixel 6 phones. It effectively automates techniques that you'd usually need skill or a tripod to achieve.

But can you recreate them in Photoshop? For the first example, of the cyclist, absolutely you can. There are several ways to do this. Our first approach would be to duplicate the image as a second layer, apply the Motion Blur filter to it, set the layer's opacity to perhaps 50% and then use the eraser tool to gradually take away the blur in the areas we wanted sharp.
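That layer recipe can be sketched in a few lines of Python. This is a simplified stand-in for what Photoshop does, not its actual filter code: the blur here is a plain horizontal box average, the "eraser" is a yes/no mask rather than a soft brush, and the function names and parameters are our own hypothetical ones.

```python
def motion_blur_row(row, distance):
    """Horizontal box blur: each pixel becomes the mean of a window of
    about `distance` pixels centred on it (clipped at the image edges)."""
    half = distance // 2
    out = []
    for x in range(len(row)):
        window = row[max(0, x - half): x + half + 1]
        out.append(sum(window) / len(window))
    return out


def fake_pan(image, subject_mask, distance=5, opacity=0.5):
    """Blend a blurred copy over the original, except where the subject is.

    image:        2D list of grayscale values
    subject_mask: 2D list of booleans, True = keep this pixel sharp
    opacity:      how strongly the blurred layer shows (0.5 = 50%)
    """
    result = []
    for row, mask_row in zip(image, subject_mask):
        blurred = motion_blur_row(row, distance)
        new_row = []
        for original, blur, keep_sharp in zip(row, blurred, mask_row):
            if keep_sharp:
                new_row.append(original)  # blur layer "erased" here
            else:
                new_row.append(opacity * blur + (1 - opacity) * original)
        result.append(new_row)
    return result


scene = [[0, 0, 90, 0, 0]]                       # one bright "cyclist" pixel
cyclist = [[False, False, True, False, False]]
print(fake_pan(scene, cyclist, distance=3))      # [[0.0, 15.0, 90, 15.0, 0.0]]
```

Notice that the subject's brightness leaks into the pixels on either side of it. That halo is one of the giveaways of the manual approach, and cleaning it up is where the fiddly eraser work comes in.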

The case of the waterfall is more complicated because we’re dealing with a subject that doesn’t just move, but changes as it moves. Try to get that in Photoshop and you’re starting to merge photo editing with digital painting.

The Pixel 6’s Motion Mode is far from the first to use multiple exposures to achieve these effects. There are countless apps that try to do this, and almost all of them are terrible. The recurring problem: they don’t account for hand movement, leaving the entire image looking messy or blurred.

Google’s version does. The remaining issue is how well Motion Mode can make several merged exposures look like a single one. In slow shutter photography, the sensor receives light information continuously for up to, say, 30 seconds. Motion Mode records a series of quick snapshots, not a consistent stream of image information.
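A toy model shows why that difference matters. The Python sketch below follows a single bright dot moving along a one-pixel-high strip and compares one long exposure with a stack of short ones. It is purely illustrative: the `stack_exposures` function and its parameters are hypothetical, time is counted in arbitrary steps, and it says nothing about how Google actually fills the gaps.

```python
def stack_exposures(width, speed, frames, shutter, interval):
    """Average `frames` snapshots of a bright dot moving along a 1D strip.

    speed:    pixels the dot moves per time step (whole number)
    shutter:  time steps each frame stays open
    interval: time steps between the start of consecutive frames
    (Toy illustration only; not how Motion Mode is implemented.)
    """
    total = [0.0] * width
    for frame in range(frames):
        start = frame * interval
        for t in range(start, start + shutter):
            x = t * speed
            if 0 <= x < width:
                total[x] += 1.0  # the dot lights this pixel while the shutter is open
    return [value / frames for value in total]


# One long exposure: the shutter is open the whole time, so the trail is unbroken.
long_exposure = stack_exposures(12, speed=1, frames=1, shutter=12, interval=12)
print(all(v > 0 for v in long_exposure))  # True

# Four quick snapshots: the dot is only recorded at pixels 0, 3, 6 and 9.
burst = stack_exposures(12, speed=1, frames=4, shutter=1, interval=3)
print([i for i, v in enumerate(burst) if v > 0])  # [0, 3, 6, 9]
```

The long exposure records a continuous streak, while the burst leaves a dotted line. Any multi-frame approach has to smooth over those gaps somehow if the result is to pass for a genuine slow-shutter shot.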

This is a hurdle computational photography will need to surmount, just as correct depth mapping and object recognition remain barriers to an entirely legitimate-looking image in background-blurred Portrait photography. Still, Google is likely to do this much better than any app you can currently download for an Android phone.

Magic Eraser, Face Unblur and Motion Mode: final thoughts

An AI photo editing battle is underway, with Google and Adobe leading the way. While Photoshop has traditionally been a desktop app, Adobe is clearly aware of growing competition on smartphones – it recently announced that new AI editing tools coming to Lightroom would all work just as well on mobile devices as on desktop.

Is Google stealing its thunder with the Pixel 6 range's impressive new tools? Professionals will undoubtedly still want the precision and power of Photoshop and Lightroom for some time. Computational photography still has limits, and those are pretty apparent when you blow up a smartphone photo any larger than a phone screen.

But the Google Pixel 6's new tricks do, on paper at least, hold great promise for casual photographers. All three of its new modes either solve common issues in point-and-shoot photography, or let you get results close to those that aren’t easy to achieve without extra equipment and real skill.

It’s Google exploring the potential of computational photography at its best, because it doesn’t just rely on processing a single image until it’s unrecognisable from the original shot. We think Magic Eraser is most likely to show up the current limitations of this kind of photography. Calling it 'magic' doesn’t really help, either.

However, we're not going to turn our noses up at any of these tricks, particularly when plenty of photographers who might mock phone cameras use similar techniques, just with a more hands-on approach.

Andrew is a freelance journalist and has been writing and editing for some of the UK's top tech and lifestyle publications including TrustedReviews, Stuff, T3, TechRadar, Lifehacker and others.