Micron launches 36GB HBM3E memory as it plays catch up with Samsung and SK Hynix as archrivals frantically rush towards the next big thing — HBM4 with its 16 layers, 1.65TBps bandwidth, and 48GB SKUs

Micron's 36GB HBM3E offers faster processing speeds with low power consumption


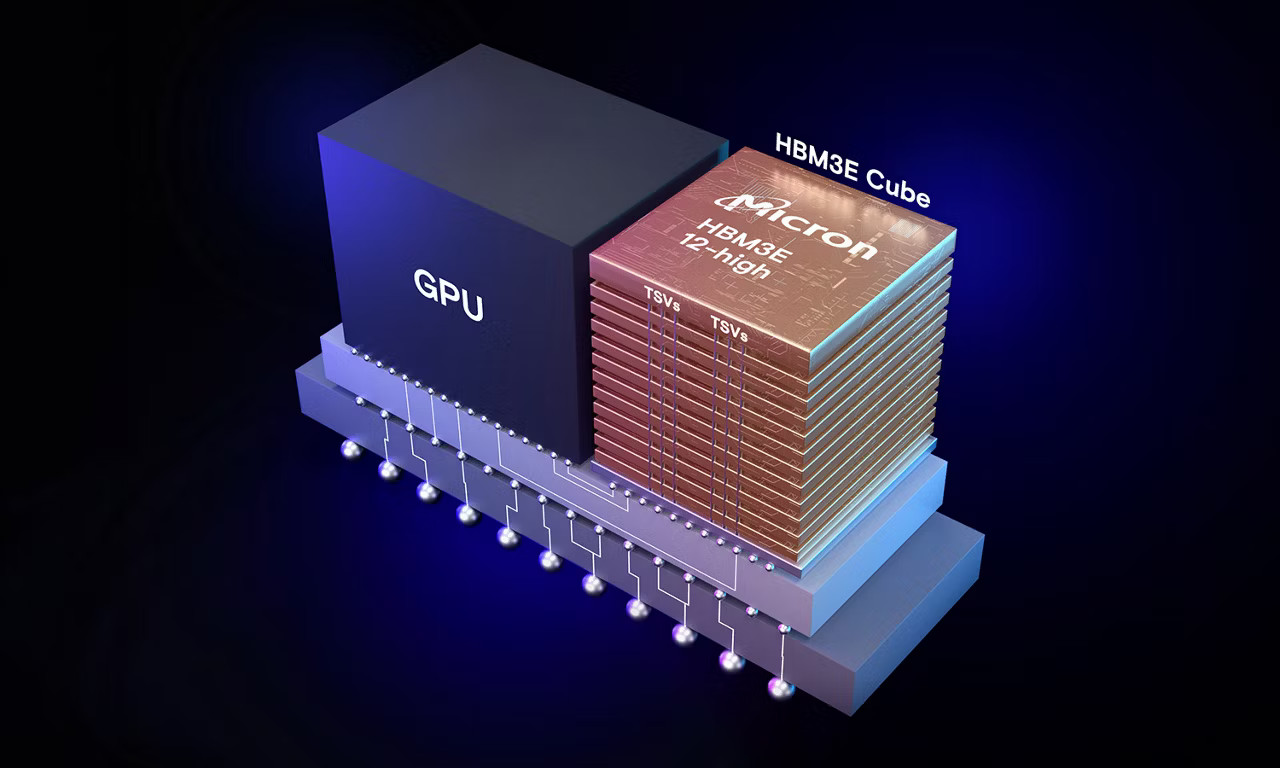

Micron has officially launched its 36GB HBM3E 12-high memory, signaling the company's entry into the competitive landscape of high-performance memory solutions for AI and data-driven systems.

As AI workloads grow more complex and data-heavy, the need for energy-efficient memory solutions becomes paramount. Micron's HBM3E aims to strike that balance, providing faster processing speeds without the high energy demands typically associated with such powerful systems.

Micron's 36GB HBM3E 12-high memory stands out for offering 50% more capacity than current HBM3E products. This makes it a crucial component for AI accelerators and data centers managing large workloads.

36GB HBM3E 12-High memory for AI acceleration

Micron says its offering delivers more than 1.2 terabytes per second (TB/s) of memory bandwidth, with a pin speed greater than 9.2 gigabits per second (Gb/s), ensuring rapid data access for AI applications. The new memory addresses the growing demand for larger AI models and more efficient data processing, and Micron claims it consumes 30% less power than rival products.
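To see how the pin speed and the bandwidth figure relate, here is a rough back-of-the-envelope sketch. It assumes a 1024-bit data interface per stack, which is the width defined for HBM3-class memory but is not stated in Micron's announcement as quoted here; the function names are illustrative only.

```python
# Assumption: each HBM3E stack exposes a 1024-bit data interface
# (the HBM3-class width; not a figure quoted in this article).
INTERFACE_WIDTH_BITS = 1024


def stack_bandwidth_tbps(pin_speed_gbps: float,
                         width_bits: int = INTERFACE_WIDTH_BITS) -> float:
    """Peak per-stack bandwidth in TB/s (decimal units) for a given pin speed in Gb/s."""
    gigabits_per_second = pin_speed_gbps * width_bits
    gigabytes_per_second = gigabits_per_second / 8  # 8 bits per byte
    return gigabytes_per_second / 1000  # GB/s -> TB/s


def pin_speed_for_bandwidth(target_tbps: float,
                            width_bits: int = INTERFACE_WIDTH_BITS) -> float:
    """Pin speed in Gb/s needed to reach a target per-stack bandwidth in TB/s."""
    return target_tbps * 1000 * 8 / width_bits


if __name__ == "__main__":
    print(f"9.2 Gb/s per pin gives about {stack_bandwidth_tbps(9.2):.2f} TB/s")
    print(f"1.2 TB/s needs about {pin_speed_for_bandwidth(1.2):.2f} Gb/s per pin")
```

Under that assumption, 9.2 Gb/s per pin works out to just under 1.2 TB/s, so the "more than 1.2 TB/s" figure implies a pin speed somewhat above 9.2 Gb/s, which is consistent with Micron's "greater than" wording.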

Although Micron’s HBM3E 12-high memory brings noteworthy improvements in terms of capacity and power efficiency, it enters a field where both Samsung and SK Hynix have already established dominance. These two rivals are aggressively pursuing the next big thing in high-bandwidth memory—HBM4.

HBM4 is expected to feature 16 layers of DRAM, offering more than 1.65TBps of bandwidth—far surpassing the capabilities of HBM3E. Additionally, with configurations reaching up to 48GB per stack, HBM4 will provide even greater memory capacity, enabling AI systems to handle increasingly complex workloads.
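The capacity and bandwidth figures quoted for the two generations can be compared with some simple arithmetic. The sketch below uses only numbers from this article; the HBM4 values are expectations rather than shipping specifications, and the helper name is hypothetical.

```python
def gb_per_layer(stack_gb: float, layers: int) -> float:
    """Capacity contributed by each DRAM layer in a stack, in GB."""
    return stack_gb / layers


# Figures quoted in the article.
hbm3e_per_layer = gb_per_layer(36, 12)  # Micron HBM3E 12-high
hbm4_per_layer = gb_per_layer(48, 16)   # expected HBM4 16-high

# The baseline implied by "50% more capacity" for the 36GB part.
implied_baseline_gb = 36 / 1.5

# Ratio of the expected HBM4 bandwidth to Micron's stated 1.2 TB/s.
bandwidth_ratio = 1.65 / 1.2

if __name__ == "__main__":
    print(f"HBM3E 12-high: {hbm3e_per_layer} GB per layer")
    print(f"HBM4 16-high:  {hbm4_per_layer} GB per layer")
    print(f"Implied baseline stack: {implied_baseline_gb} GB")
    print(f"HBM4 / HBM3E bandwidth: {bandwidth_ratio:.3f}x")
```

On these numbers, both configurations come to the same capacity per layer, which suggests the jump to 48GB comes from stacking more layers rather than from denser dies.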

Despite the pressure from its competitors, Micron remains a critical player in the AI ecosystem with its HBM3E 12-high memory.


The company has already begun shipping production-capable units to key industry partners for qualification, allowing them to incorporate the memory into their AI accelerators and data center infrastructures. Micron’s robust support network and ecosystem partnerships ensure that its memory solutions are integrated seamlessly into existing systems, driving performance improvements in AI workloads.

A notable collaboration is Micron’s partnership with TSMC’s 3DFabric Alliance, which helps optimize AI system manufacturing. This alliance supports the development of Micron’s HBM3E memory and ensures that it can be integrated into advanced semiconductor designs, further enhancing the capabilities of AI accelerators and supercomputers.


Efosa has been writing about technology for over 7 years, initially driven by curiosity but now fueled by a strong passion for the field. He holds both a Master's and a PhD in sciences, which provided him with a solid foundation in analytical thinking.
