Samsung HBM roadmap shows Google could become Nvidia's fiercest competitor in AI by 2026, but I wonder what's happening to Microsoft

Nvidia is buying far more high-bandwidth memory than any of its rivals

- Nvidia stays king of AI chips, buying millions of HBM units from Samsung in 2026

- Google’s TPU push makes it Nvidia's closest rival for HBM

- Amazon, AMD, and Microsoft fight for scraps while Intel fades into obscurity

High-bandwidth memory is essential for AI and cloud computing, offering high-speed data transfer and efficiency for demanding workloads.

The HBM space is currently dominated by South Korean memory giant SK Hynix and its chief rival (and neighbor) Samsung, although other players, like Micron, are also looking to grow their share of the market.

Tech giants like Nvidia, Google, Amazon, and Microsoft are among the biggest purchasers of HBM, and an internal Samsung roadmap spotted by ComputerBase provides a fascinating insight into their projected shopping lists for 2026.

Google in second place

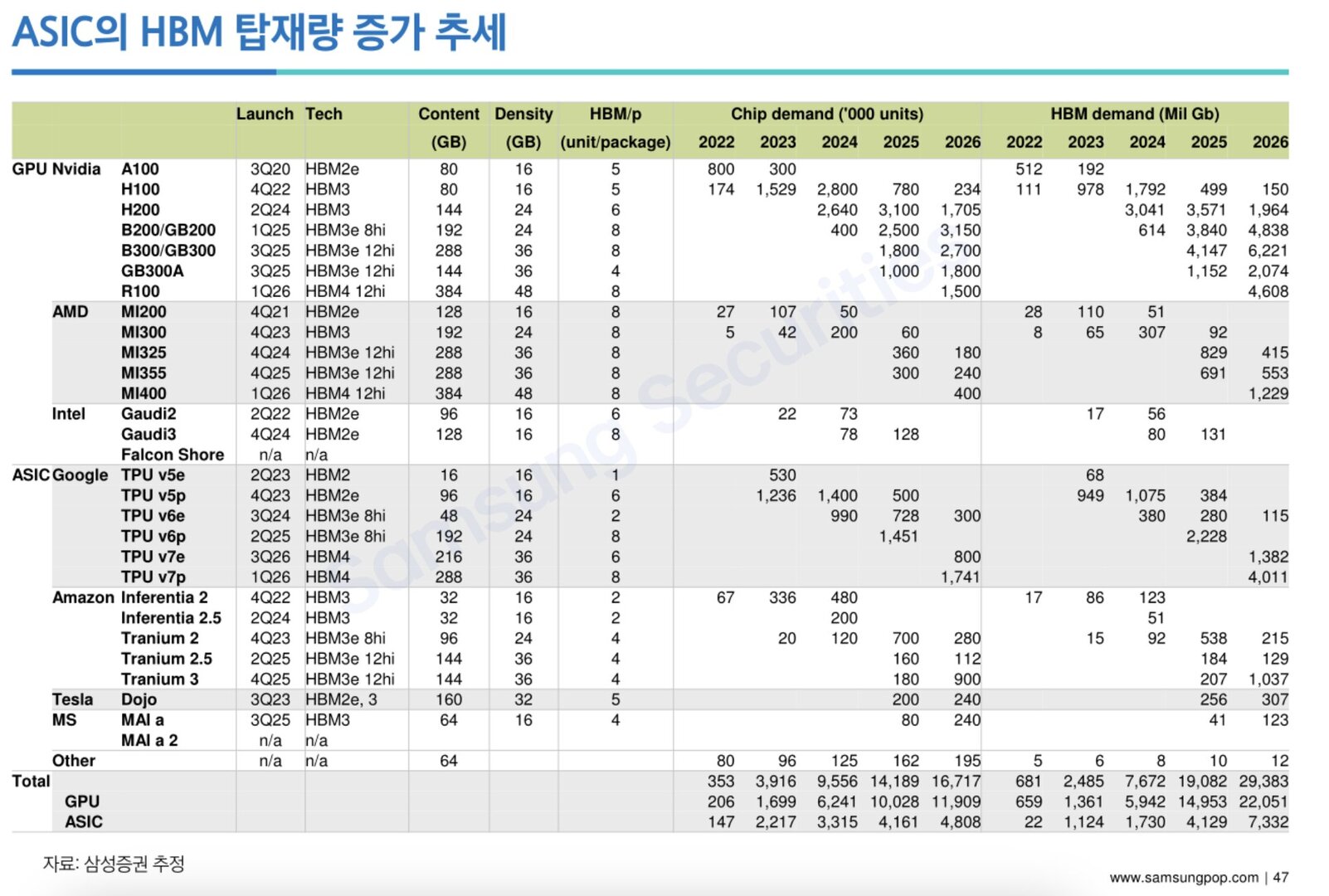

As you would expect, AI darling Nvidia remains the top purchaser of HBM from Samsung, with demand expected to grow from 5.8 million units in 2024 to 11 million units by 2026.

This increase is fueled by Nvidia's expanding lineup of AI accelerators. The H200, equipped with 144GB of HBM3, is projected to need 1.7 million units in 2026. The B200 and GB200, using 192GB of HBM3e (8hi), are expected to require 3.15 million units. The B300 and GB300, with 288GB of HBM3e (12hi), are forecast to take 2.7 million units, while the GB300A, using 144GB of HBM3e (12hi), is estimated to take 1.8 million units. The R100, expected to sport 384GB of HBM4 (12hi), will need 1.5 million units.
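As a quick sanity check on those per-product figures, the short Python sketch below sums them and shows each product's share. The numbers are the roadmap figures quoted above, stored in thousands of units so the sum stays exact; the dictionary and function names are purely illustrative and don't come from Samsung or ComputerBase.

```python
# Projected 2026 HBM demand from Samsung for each Nvidia product,
# in thousands of units, as quoted from the roadmap in this article.
# Integers (thousands) avoid floating-point rounding when summing.
NVIDIA_2026_HBM_K: dict[str, int] = {
    "H200": 1_700,
    "B200/GB200": 3_150,
    "B300/GB300": 2_700,
    "GB300A": 1_800,
    "R100": 1_500,
}


def total_demand(demand: dict[str, int]) -> int:
    """Sum per-product demand, in thousands of units."""
    return sum(demand.values())


def shares(demand: dict[str, int]) -> list[tuple[str, float]]:
    """Each product's fraction of the summed total, largest first."""
    t = total_demand(demand)
    return sorted(
        ((name, units / t) for name, units in demand.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )


if __name__ == "__main__":
    t = total_demand(NVIDIA_2026_HBM_K)
    print(f"Itemized total: {t:,}k units (headline figure: ~11,000k)")
    for name, frac in shares(NVIDIA_2026_HBM_K):
        print(f"  {name:<11} {frac:6.1%}")
```

The itemized products add up to 10.85 million units, just under the roughly 11 million headline figure, so the five accelerator families listed account for nearly all of Nvidia's projected 2026 demand, with B200/GB200 the largest slice at about 29%.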

According to the roadmap, which ComputerBase says "probably dates from the beginning of 2024" and is therefore not fully up to date, Google is expected to become the second-largest buyer of HBM, driven by its investment in Tensor Processing Units (TPUs).

Google's total HBM demand is projected to grow from 2.4 million units in 2024 to 2.8 million units by 2026. TPU v7p alone is expected to require 1.7 million units in 2026. Alphabet has allocated $75 billion in capital expenditures this year, a portion of which will likely be spent on HBM procurement and AI infrastructure.


Amazon’s demand for HBM is also growing steadily, as the company’s Inferentia and Trainium chips are projected to need 1.3 million units of HBM by 2026. Trainium 3 alone is expected to require 900,000 units that year.

AMD is widely seen as Nvidia’s closest rival, but it trails far, far behind. The company’s total HBM demand is projected to reach 820,000 units in 2026. The MI400, expected to launch in early 2026 with 384GB of HBM4 (12hi), will account for 400,000 units that year.

While Microsoft integrates HBM3 in its Maia AI chips, its total HBM demand is expected to reach just 240,000 units in 2026, indicating that it remains a minor player in this space.

Intel is also struggling to compete. The roadmap shows that its Gaudi3 accelerator is losing traction, with HBM demand for the chip falling from 128,000 units in 2025 to none forecast for 2026 (although bear in mind that this may have changed since the roadmap was created).
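To put the 2026 totals for all six buyers side by side, here is a small Python sketch that ranks them and compares Nvidia with everyone else combined. It uses only the Samsung roadmap figures quoted in this article (in thousands of units), treats Intel's "none forecast" as zero, and the names in it are illustrative rather than taken from any source.

```python
# Projected 2026 HBM demand from Samsung by buyer, in thousands of units,
# using the roadmap figures quoted in this article (Samsung supply only).
DEMAND_2026_K: dict[str, int] = {
    "Nvidia": 11_000,
    "Google": 2_800,
    "Amazon": 1_300,
    "AMD": 820,
    "Microsoft": 240,
    "Intel": 0,  # no Gaudi3 demand forecast for 2026 on the roadmap
}

# The single product the article calls out for each buyer (thousands of units).
FLAGSHIP_2026_K: dict[str, tuple[str, int]] = {
    "Google": ("TPU v7p", 1_700),
    "Amazon": ("Trainium 3", 900),
    "AMD": ("MI400", 400),
}


def ranked(demand: dict[str, int]) -> list[tuple[str, int]]:
    """Buyers sorted from largest to smallest projected demand."""
    return sorted(demand.items(), key=lambda kv: kv[1], reverse=True)


def rest_combined(demand: dict[str, int], leader: str) -> int:
    """Total demand of every buyer except `leader`."""
    return sum(units for name, units in demand.items() if name != leader)


if __name__ == "__main__":
    for rank, (name, units) in enumerate(ranked(DEMAND_2026_K), start=1):
        print(f"{rank}. {name:<10} {units:>6,}k")
    others = rest_combined(DEMAND_2026_K, "Nvidia")
    print(f"Nvidia vs. everyone else combined: {DEMAND_2026_K['Nvidia'] / others:.2f}x")
    for buyer, (product, units) in FLAGSHIP_2026_K.items():
        print(f"{product} share of {buyer}'s demand: {units / DEMAND_2026_K[buyer]:.0%}")
```

On these numbers, Nvidia's 11 million units are a little more than twice the 5.16 million of the other buyers combined, and the chips singled out above make up roughly 61% (TPU v7p), 69% (Trainium 3) and 49% (MI400) of their companies' respective totals.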

Of course, these numbers cover only HBM supplied by Samsung. Factoring in memory from SK Hynix and Micron could paint a different picture, albeit one in which Nvidia is still comfortably the top purchaser of HBM.


Wayne Williams is a freelancer writing news for TechRadar Pro. He has been writing about computers, technology, and the web for 30 years. In that time he wrote for most of the UK’s PC magazines, and launched, edited and published a number of them too.
