Nvidia G-Sync vs AMD FreeSync

It's best to let rip when tearing frames

Attention all gamers, the format wars are back: the battle of G-Sync and FreeSync is in full swing, especially now that Nvidia has opened up its G-Sync spec. While this does mean that both Team Red and Team Green have solved the problem of screen tearing and frame stuttering in the best PC games, it also means that the market for the best gaming monitors is harder to navigate than ever.

What's the problem?

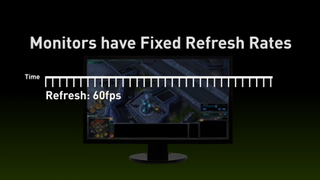

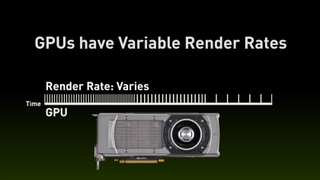

The source of the problem when displaying high-performance PC games is that monitors have a constant refresh rate, e.g. 75Hz means that the screen is updated 75 times per second. Meanwhile, graphics cards (GPUs) render new images at a variable rate, depending on the computational load they're bearing.

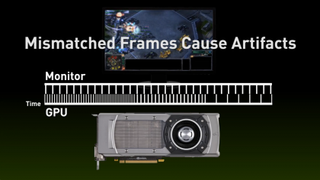

When the refresh rate and frame rate don’t match, the current frame being rendered on the GPU can become unsynchronized. So, partway through the process of sending a new frame to the display, the graphics card will move on to the next frame.

This switch appears as a discontinuity in what you see on-screen. Usually, the discontinuity travels across the screen on consecutive frames as the phase difference between the GPU and monitor changes. This discontinuity is what we call tearing, and in extreme cases there can be several tears at once.
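To make the drifting tear concrete, here is a rough Python sketch of where the tear line would land on successive frames. It is a simplified model, not how any driver actually works: it assumes a constant frame rate, VSync off, an instant buffer swap the moment each frame finishes, and no vertical blanking interval. The function name `tear_rows` and its parameters are made up for illustration.

```python
def tear_rows(fps, refresh_hz, height, frames):
    """Estimate the screen row where each of the next `frames` tears appears.

    Simplified model (assumptions): constant frame rate, VSync off, the GPU
    swaps buffers the instant a frame is done, and the monitor scans out
    rows top to bottom at a steady pace with no blanking interval.
    """
    rows = []
    for k in range(1, frames + 1):
        # How many refresh cycles have elapsed when frame k is finished.
        cycles = k * refresh_hz / fps
        # The fractional part says how far down the screen the scanout is.
        phase = cycles % 1.0
        rows.append(int(phase * height))
    return rows


# A 60fps game on a 75Hz monitor with 1,000 rows: the tear moves down
# the screen by a quarter of its height on each new frame.
print(tear_rows(60, 75, 1000, 4))
```

When the frame rate divides evenly into the refresh rate, the tear stays put in this model; any other ratio makes it wander up or down the screen.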

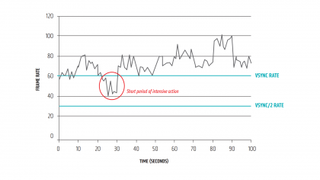

Most PC games have a setting called VSync, which can reduce the tearing effect. VSync effectively limits the frame rate of the GPU, such that if one particular frame has taken too long to be rendered on the GPU and it misses its slot on the monitor, the GPU will delay sending any graphics data to the monitor until the next screen refresh.
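The cost of missing a slot can be sketched in a few lines of Python. This is a simplified model of plain double-buffered VSync, ignoring triple buffering and driver-level tricks, and the function name `vsync_present_ms` is hypothetical.

```python
import math


def vsync_present_ms(render_ms, refresh_hz):
    """Return when a frame actually reaches the screen under VSync.

    Assumption: the frame starts rendering right at a refresh, and can only
    be shown at the next refresh boundary after it has finished.
    """
    interval_ms = 1000.0 / refresh_hz
    # Round the render time up to a whole number of refresh intervals.
    slots = max(1, math.ceil(render_ms / interval_ms))
    return slots * interval_ms


# On a 60Hz monitor each refresh slot lasts about 16.7ms.
print(vsync_present_ms(16.0, 60))  # makes its slot
print(vsync_present_ms(17.0, 60))  # just misses, so it waits for the next one
```

In this model a frame that takes 17ms on a 60Hz display is held until the second refresh, roughly 33.3ms in, which is equivalent to dropping to 30 frames per second for that frame.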

Brilliant! Problem solved then? Well, not quite. VSync isn’t perfect, and the delay in sending a frame to the monitor causes stuttering and input lag during the times that the GPU is under the most processing load, which is also when a gamer needs the fastest response. So, although VSync was for a long time the only remedy for tearing, many gamers choose to disable it in order to get the most responsive system, despite the ugly tearing effect.

Nvidia to the rescue, kind of

Since 2014, Nvidia has been promoting its solution to the VSync problem, which it has dubbed G-Sync. The basic concept with G-Sync is that the GPU actually controls the refresh rate of the monitor. By doing this, the monitor and GPU are always in sync and therefore there is never any tearing or stuttering. Prior to this, Nvidia had already been working on Adaptive VSync.

As PC Perspective notes, there are three regimes in which any variable refresh rate GPU/monitor system needs to operate: A) when the GPU's frame rate is below the minimum refresh rate of the monitor; B) when the GPU's frame rate is between the minimum and maximum refresh rates of the monitor; and C) when the GPU's frame rate is greater than the maximum refresh rate of the monitor.

Case B is straightforward: the GPU simply sets the refresh rate of the monitor to equal its frames per second.

When a G-Sync compatible GPU and monitor are operating in case C, Nvidia has decided that the GPU should default to VSync mode. However, in case A, G-Sync sets the monitor's refresh rate to be an integer multiple of the current frames per second coming from the GPU. This is similar to the frame-delaying strategy of VSync, but has the advantage of keeping in step with the monitor because of the whole-number multiplier.
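The three cases can be summed up in a short Python sketch. This only illustrates the behaviour described above and is not Nvidia's actual algorithm: the function name `pick_refresh` and the 30Hz to 144Hz range are made up for the example, and the sketch assumes the monitor's maximum refresh rate is at least double its minimum, so that a suitable multiple always fits.

```python
import math


def pick_refresh(fps, min_hz, max_hz):
    """Return (case, refresh_hz) for a given GPU frame rate.

    Assumption: max_hz >= 2 * min_hz, so the multiplied rate in case A
    never overshoots the monitor's maximum.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    if fps > max_hz:
        # Case C: fall back to VSync, with the monitor pinned at its maximum.
        return ("C", max_hz)
    if fps >= min_hz:
        # Case B: the monitor simply follows the GPU.
        return ("B", fps)
    # Case A: show each frame a whole number of times, using the smallest
    # multiplier that lifts the refresh rate back into the supported range.
    multiplier = math.ceil(min_hz / fps)
    return ("A", fps * multiplier)


for fps in (20, 60, 200):
    print(fps, pick_refresh(fps, 30, 144))
```

With these hypothetical numbers, a game running at 20 frames per second would have the monitor refresh at 40Hz, drawing every frame twice.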

The (somewhat literal) price of this solution is that Nvidia needs to have a proprietary chip in every G-Sync compatible monitor. This has the undesirable result of G-Sync monitors costing more, thanks to the extra electronics and the associated license fees paid to Nvidia. On top of that, G-Sync is not supported by AMD GPUs.